AI agent observability: The developer's guide to agent monitoring

Most "agent observability best practices" content reads like a compliance checklist from 2019 with "AI" pasted over "microservices." Implement comprehensive logging. Establish evaluation metrics. Create governance frameworks. Not a single line of code. No mention of what happens when your agent silently picks the wrong tool on turn 3 and you need to figure out why.

Agent monitoring needs two things: dashboards that show you what's happening across all your agents, and traces that show you exactly why something went wrong in a specific run. Most tools give you one or the other. Here's what it looks like when you have both.

What is agent observability?

Agent observability is end-to-end visibility into what your AI agents are doing: which models they call, which tools they invoke, what decisions they make at each step, and how those decisions affect the final output.

Traditional application monitoring tracks requests, errors, and latency. That works for stateless HTTP services where each request is independent.

AI agents are different. A single agent run might involve multiple LLM calls, tool executions, sub-agent handoffs, and multi-turn reasoning loops, all dependent on each other. When the output is wrong, the failure could be anywhere in that chain: a bad tool response, a context window overflow, a model selecting the wrong function, or a handoff losing state.

Agent observability tracks the full reasoning chain across these interactions. You can't evaluate agent quality, debug agentic workflows, or control costs without this level of visibility.

Why traditional monitoring fails for AI agents

Standard APM tools will tell you that POST /api/chat returned 200 in 4.2 seconds. They won't tell you that inside that request, the agent made 5 LLM calls, the third one picked the wrong tool, the tool returned stale data, and the model faithfully summarized garbage.

If your agent monitoring plan is "log everything and figure it out later," you'll have a dashboard full of counts and averages with no way to dig deeper. An agent that returns the wrong answer might have made 12 LLM calls, executed 4 tools, and handed off to a sub-agent before producing garbage. Aggregate metrics tell you that error rates went up. They don't tell you where the reasoning went wrong.

What you need is structured tracing built on a standard convention, so dashboards, traces, and alerts all speak the same language.

The OpenTelemetry standard for agent observability

The OpenTelemetry gen_ai semantic conventions define the standard for instrumenting AI agent systems. Instead of custom logging, every AI operation produces a structured span with a consistent set of attributes. Here are the core operations defined by the convention:

| Span operation | What it captures |
|---|---|
| gen_ai.request | A single LLM call: model, prompt, response, token counts |
| gen_ai.invoke_agent | The full lifecycle of an agent run, from task to final output |
| gen_ai.execute_tool | A tool/function call: name, input, output, duration |

These compose into a span tree:

```
POST /api/chat (http.server)
└── gen_ai.invoke_agent "Research Agent"
    ├── gen_ai.request "chat claude-sonnet-4-6"     ← initial reasoning
    ├── gen_ai.execute_tool "search_docs"           ← tool call
    ├── gen_ai.request "chat claude-sonnet-4-6"     ← process results
    ├── gen_ai.execute_tool "summarize"             ← second tool call
    ├── gen_ai.request "chat claude-sonnet-4-6"     ← decides to hand off
    └── gen_ai.execute_tool "transfer_to_writer"    ← handoff via tool
        └── gen_ai.invoke_agent "Writer Agent"
            ├── gen_ai.request "chat gemini-2.5-flash"
            └── gen_ai.execute_tool "format_output"
```

This isn't proprietary. It's an open standard, and any observability platform that follows it can ingest these spans. The span op follows the pattern gen_ai.{operation_name}; for manual instrumentation, gen_ai.request covers all LLM calls. Auto-instrumentation from SDKs may produce more specific ops like gen_ai.chat or gen_ai.embeddings, depending on the API being called. Because these are structured spans rather than unstructured logs, they power both dashboards and trace views.
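To make the composition concrete, here is a minimal Python sketch that models the example tree as plain data and walks it to count LLM calls and tool calls per agent, the same kind of roll-up the agent dashboards compute from these spans. The Span dataclass and summarize_agents helper are hypothetical illustrations, not a Sentry or OpenTelemetry API; only the op strings come from the tree above.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Span:
    """Hypothetical stand-in for an exported span: an op, a name, and child spans."""
    op: str
    name: str
    children: list[Span] = field(default_factory=list)


def summarize_agents(root: Span) -> dict[str, dict[str, int]]:
    """Count LLM calls and tool calls, attributed to the nearest enclosing agent span."""
    counts = defaultdict(lambda: {"llm_calls": 0, "tool_calls": 0})

    def walk(span: Span, agent: str | None) -> None:
        if span.op == "gen_ai.invoke_agent":
            agent = span.name  # everything below this span belongs to this agent
        elif agent is not None and span.op == "gen_ai.request":
            counts[agent]["llm_calls"] += 1
        elif agent is not None and span.op == "gen_ai.execute_tool":
            counts[agent]["tool_calls"] += 1
        for child in span.children:
            walk(child, agent)

    walk(root, None)
    return dict(counts)


# The example tree, rebuilt as data.
writer = Span("gen_ai.invoke_agent", "Writer Agent", [
    Span("gen_ai.request", "chat gemini-2.5-flash"),
    Span("gen_ai.execute_tool", "format_output"),
])
research = Span("gen_ai.invoke_agent", "Research Agent", [
    Span("gen_ai.request", "chat claude-sonnet-4-6"),
    Span("gen_ai.execute_tool", "search_docs"),
    Span("gen_ai.request", "chat claude-sonnet-4-6"),
    Span("gen_ai.execute_tool", "summarize"),
    Span("gen_ai.request", "chat claude-sonnet-4-6"),
    Span("gen_ai.execute_tool", "transfer_to_writer", [writer]),
])
trace = Span("http.server", "POST /api/chat", [research])

print(summarize_agents(trace))
# {'Research Agent': {'llm_calls': 3, 'tool_calls': 3}, 'Writer Agent': {'llm_calls': 1, 'tool_calls': 1}}
```

One design choice worth noting: the handoff tool (transfer_to_writer) is counted against the Research Agent that called it, while everything under the nested invoke_agent span is attributed to the Writer Agent.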

Key metrics for AI agent monitoring

Before diving into tooling, here's what you should be tracking for any AI agent in production:

Reliability metrics:

Agent error rate - percentage of agent runs that fail or return errors

Tool failure rate - which tools are unreliable, and how often they break agent runs

Latency (p50, p95) - per-agent and per-model, to catch regressions and slowdowns

Cost metrics:

Token usage - input, output, cached, and reasoning tokens per model. Cached and reasoning tokens are subsets of the totals, not additive. Get this wrong and your cost dashboards are fiction.

Cost per model - compare models doing similar work. You might discover claude-sonnet-4-6 costs $10.8K/week while gemini-2.5-flash-lite handles the same volume for $645.

Cost per user/tier - which users or pricing tiers are consuming the most AI resources
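To see why the subset rule matters, here is a minimal cost sketch. It assumes cached tokens are counted inside the input total and reasoning tokens inside the output total, as described above, and that reasoning tokens are billed at the output rate; some provider APIs report cached tokens as a separate field, so normalize to this shape first. The Usage and Price classes are hypothetical, and the per-million-token prices in the example are placeholders, not any provider's real rates.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    input_tokens: int             # total input, INCLUDING cached tokens
    output_tokens: int            # total output, INCLUDING reasoning tokens
    cached_input_tokens: int = 0  # subset of input_tokens
    reasoning_tokens: int = 0     # subset of output_tokens


@dataclass
class Price:
    """Hypothetical USD prices per million tokens."""
    input_per_m: float
    cached_input_per_m: float
    output_per_m: float


def estimate_cost(u: Usage, p: Price) -> float:
    # Cached tokens are part of input_tokens: price them at the cached rate
    # and only the uncached remainder at the full input rate.
    uncached = u.input_tokens - u.cached_input_tokens
    # Reasoning tokens are already inside output_tokens (assumed billed as
    # output), so they are not added a second time.
    return (
        uncached * p.input_per_m
        + u.cached_input_tokens * p.cached_input_per_m
        + u.output_tokens * p.output_per_m
    ) / 1_000_000


def naive_cost(u: Usage, p: Price) -> float:
    # The mistake: treating the subsets as additive double-counts them.
    return (
        u.input_tokens * p.input_per_m
        + u.cached_input_tokens * p.cached_input_per_m
        + (u.output_tokens + u.reasoning_tokens) * p.output_per_m
    ) / 1_000_000


usage = Usage(input_tokens=10_000, output_tokens=2_000,
              cached_input_tokens=8_000, reasoning_tokens=1_500)
price = Price(input_per_m=3.0, cached_input_per_m=0.3, output_per_m=15.0)
print(round(estimate_cost(usage, price), 4), round(naive_cost(usage, price), 4))
# 0.0384 0.0849
```

With these placeholder numbers, the naive version more than doubles the estimate for a single call, which is how a cost dashboard drifts into fiction.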

Quality metrics:

Tool call frequency - how often agents invoke each tool, and in what order

Token efficiency - average tokens per successful agent completion. If this number is growing, your prompts or context windows might be bloating.

Cache hit rate - what percentage of input tokens are served from prompt cache. If you've enabled caching and this isn't improving, something is wrong.
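Both quality ratios are simple to compute once you have per-run token counts. A minimal sketch, assuming a hypothetical AgentRun record rolled up from each run's spans. One design choice here is that token efficiency divides all tokens spent (failed runs included) by the number of successful runs, so wasted retries show up in the number; averaging over successful runs only is an equally valid definition.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class AgentRun:
    """Hypothetical per-run record, e.g. summed from a run's gen_ai spans."""
    succeeded: bool
    input_tokens: int         # includes cached tokens
    cached_input_tokens: int  # subset of input_tokens
    output_tokens: int


def cache_hit_rate(runs: list[AgentRun]) -> float:
    """Share of input tokens served from prompt cache, from 0.0 to 1.0."""
    total_input = sum(r.input_tokens for r in runs)
    if total_input == 0:
        return 0.0
    return sum(r.cached_input_tokens for r in runs) / total_input


def tokens_per_success(runs: list[AgentRun]) -> float:
    """All tokens spent (including failed runs) divided by successful runs."""
    successes = sum(1 for r in runs if r.succeeded)
    if successes == 0:
        return float("inf")  # nothing succeeded: efficiency is unbounded
    total = sum(r.input_tokens + r.output_tokens for r in runs)
    return total / successes


# Made-up example runs.
runs = [
    AgentRun(succeeded=True, input_tokens=10_000, cached_input_tokens=6_000, output_tokens=1_000),
    AgentRun(succeeded=False, input_tokens=8_000, cached_input_tokens=2_000, output_tokens=500),
    AgentRun(succeeded=True, input_tokens=12_000, cached_input_tokens=8_000, output_tokens=1_500),
]
print(round(cache_hit_rate(runs), 3), tokens_per_success(runs))
# 0.533 16500.0
```

Tracked over time, a rising tokens_per_success or a flat cache_hit_rate after enabling caching are the signals described above.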

If you want to find out more about these metrics and why they matter, our post on the core KPI metrics of LLM performance is a great way to get started. Any agent observability platform that follows OpenTelemetry conventions should surface these from trace data.

Here's what this looks like in practice using Sentry as an example.

Auto-instrumentation for 10+ frameworks

Sentry auto-instruments the major AI frameworks in both Python and Node.js, including OpenAI, Anthropic, Google GenAI, LangChain, LangGraph, Pydantic AI, OpenAI Agents SDK, Vercel AI SDK, and more. No manual span creation needed. Install the package, enable tracing, and Sentry picks it up.

The entire setup:

```python
import sentry_sdk

sentry_sdk.init(
    dsn="YOUR_DSN",
    traces_sample_rate=1.0,
)

# OpenAI, Anthropic, LangChain, LangGraph, Pydantic AI,
# Google GenAI -- all auto-instrumented when detected.
```

That's it. Make an Anthropic or OpenAI call and the spans show up in Sentry.

Pre-built agent monitoring dashboards

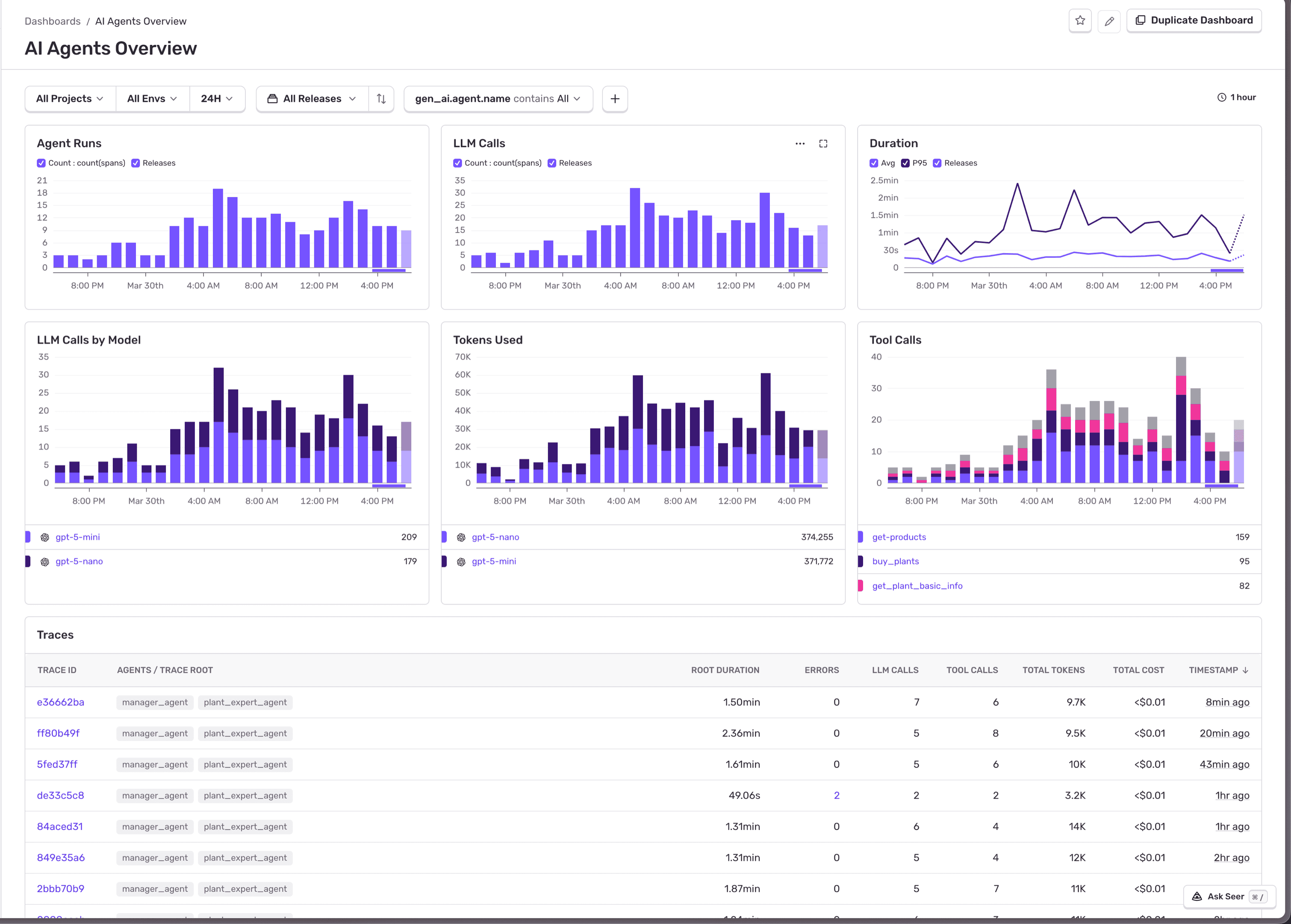

Most observability platforms offer pre-built dashboards for agent monitoring. Once instrumentation is in place, Sentry's AI Agents dashboard includes three views:

AI Agents Overview

Agent Runs, duration, total LLM calls, tokens consumed, tool calls. This is your "is everything healthy?" view.

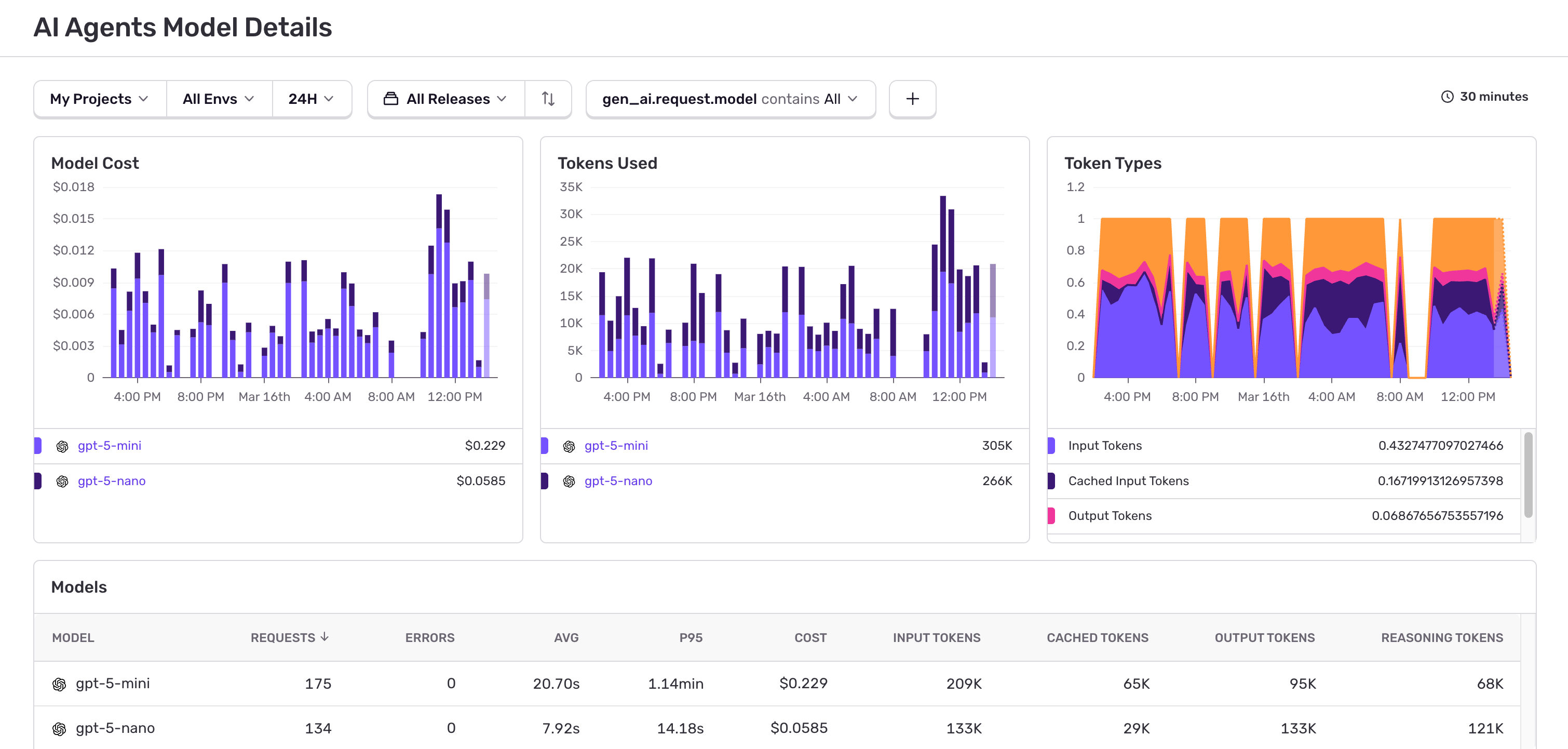

AI Agents Model Details

Per-model cost estimates, token breakdown (input/output/cached/reasoning), and latency. This surfaces the cost metrics above automatically.

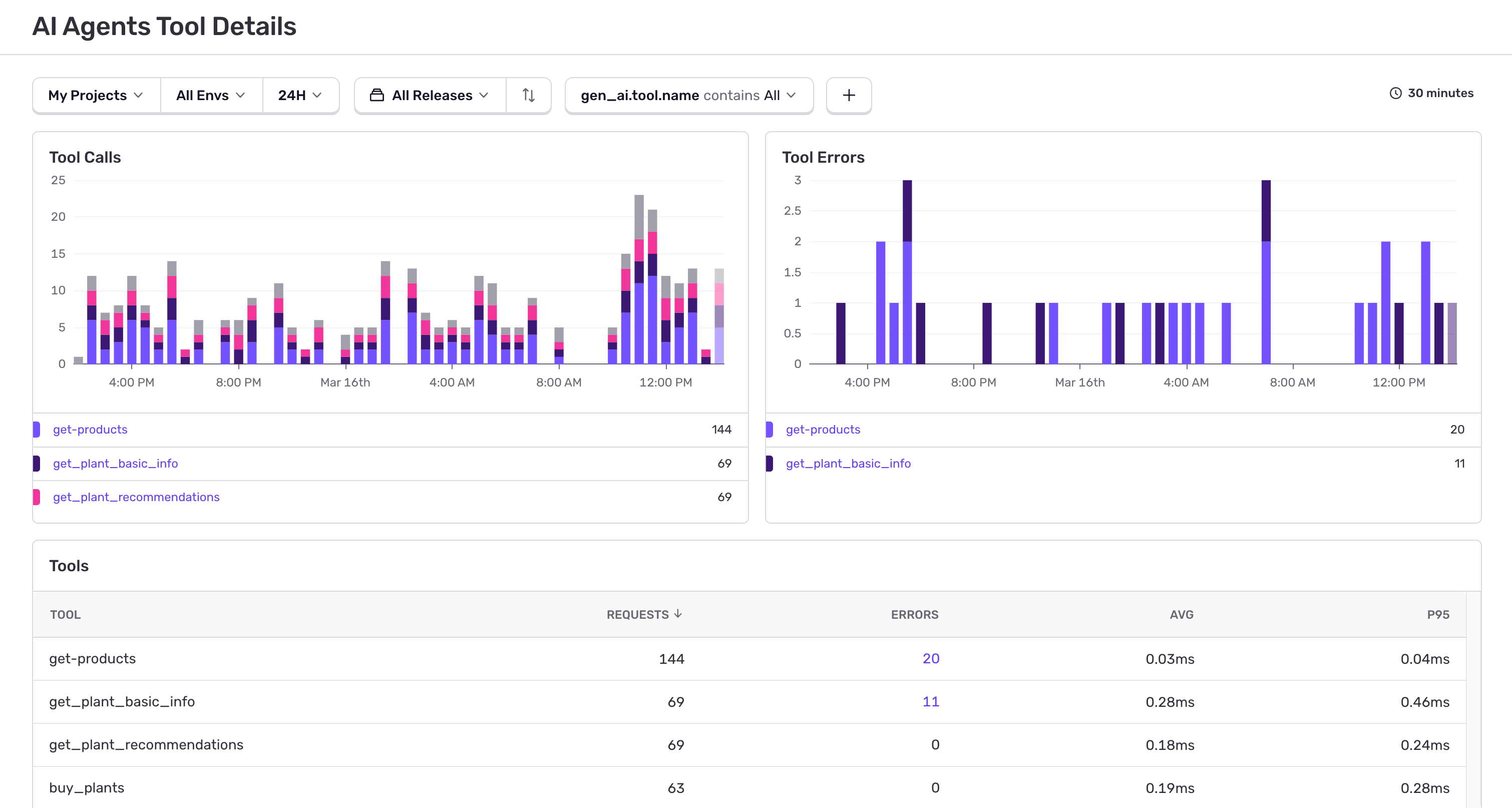

AI Agents Tool Details

Per-tool call frequency, error rates, and p95 latency. If your search_docs tool is failing 12% of the time, this is where you'll spot it before your users start complaining.
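The Tool Details numbers boil down to a group-by over tool spans. A minimal sketch of that computation, assuming exported span records shaped as hypothetical dicts with op, tool, status, and duration_ms keys. The p95 here uses the nearest-rank method, which may differ slightly from the percentile estimator a given platform uses, and the example data is made up.

```python
from __future__ import annotations

from collections import defaultdict


def tool_stats(spans: list[dict]) -> dict[str, dict[str, float]]:
    """Per-tool call count, error rate, and nearest-rank p95 latency (ms)."""
    by_tool = defaultdict(list)
    for s in spans:
        if s["op"] == "gen_ai.execute_tool":  # ignore LLM and HTTP spans
            by_tool[s["tool"]].append(s)

    stats = {}
    for tool, calls in by_tool.items():
        durations = sorted(c["duration_ms"] for c in calls)
        # Nearest rank: ceil(0.95 * n), computed with integers to avoid float error.
        rank = (95 * len(durations) + 99) // 100
        stats[tool] = {
            "calls": len(calls),
            "error_rate": sum(1 for c in calls if c["status"] != "ok") / len(calls),
            "p95_ms": durations[rank - 1],
        }
    return stats


# Made-up data: search_docs fails 12 of 100 calls.
spans = [
    {"op": "gen_ai.execute_tool", "tool": "search_docs",
     "status": "error" if i < 12 else "ok", "duration_ms": i + 1}
    for i in range(100)
]
spans += [
    {"op": "gen_ai.execute_tool", "tool": "summarize", "status": "ok", "duration_ms": d}
    for d in (220, 200, 250)
]
spans.append({"op": "gen_ai.request", "tool": None, "status": "ok", "duration_ms": 900})

stats = tool_stats(spans)
print(stats["search_docs"])  # {'calls': 100, 'error_rate': 0.12, 'p95_ms': 95}
```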

These dashboards are pre-built and available the moment spans start flowing. But they're aggregate views: per-model totals, per-tool error rates, overall agent run counts. They answer technical questions and show you what's broken, but what about the deeper business-level questions?

Custom agent monitoring dashboards

Pre-built dashboards give you aggregate health signals. They don't tell you who's driving your AI costs, which features are worth the spend, or whether your caching strategy is actually saving money. For those questions, you need to slice trace data by your own dimensions: user tier, feature flag, experiment group.

Some platforms let you build custom queries against span data. With the Sentry CLI, you can script this - and because it has an agent skill system, AI coding assistants like Claude Code can build dashboards for you:

"Who are my most expensive users?"

```shell
sentry dashboard create 'AI Cost Attribution'

sentry dashboard widget add 'AI Cost Attribution' "Most Expensive Users" \
  --display table --dataset spans \
  --query "sum:gen_ai.usage.total_tokens" \
  --where "span.op:gen_ai.request" \
  --group-by "user.id" \
  --sort "-sum:gen_ai.usage.total_tokens" \
  --limit 20
```

"Which pricing tier is eating my AI budget?"

Tag your users with their plan, then group by that tag in the dashboard:

```python
sentry_sdk.set_tag("user_tier", user.plan)  # "free", "pro", "enterprise"
```

```shell
sentry dashboard widget add 'AI Cost Attribution' "AI Cost by Tier" \
  --display bar --dataset spans \
  --query "sum:gen_ai.usage.total_tokens" \
  --where "span.op:gen_ai.request" \
  --group-by "user_tier" \
  --sort "-sum:gen_ai.usage.total_tokens"
```

This is how you'd find out, for example, that free-tier users consume 60% of your AI budget. The same tagging pattern works for any dimension: team, feature_flag, experiment_group.
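Under the hood, a widget like this is a filter, a group-by, and a sort. Here is a minimal local sketch of the same aggregation over hypothetical exported span records (dicts with op, tags, and data keys), using made-up numbers. Like the widgets, it ranks by total tokens rather than dollars, so treat the result as a cost proxy.

```python
from __future__ import annotations

from collections import Counter


def tokens_by_tag(spans: list[dict], tag: str) -> list[tuple[str, int]]:
    """Sum total tokens on LLM spans, grouped by a tag, largest first."""
    totals = Counter()
    for s in spans:
        if s["op"] != "gen_ai.request":
            continue  # like --where "span.op:gen_ai.request"
        key = s.get("tags", {}).get(tag, "(untagged)")
        totals[key] += s["data"].get("gen_ai.usage.total_tokens", 0)
    return totals.most_common()  # like --sort "-sum:gen_ai.usage.total_tokens"


# Made-up span records.
spans = [
    {"op": "gen_ai.request", "tags": {"user_tier": "free"}, "data": {"gen_ai.usage.total_tokens": 6000}},
    {"op": "gen_ai.request", "tags": {"user_tier": "pro"}, "data": {"gen_ai.usage.total_tokens": 3000}},
    {"op": "gen_ai.request", "tags": {"user_tier": "enterprise"}, "data": {"gen_ai.usage.total_tokens": 1000}},
    {"op": "gen_ai.execute_tool", "tags": {"user_tier": "free"}, "data": {}},
]

ranked = tokens_by_tag(spans, "user_tier")
total = sum(tokens for _, tokens in ranked)
for tier, tokens in ranked:
    print(f"{tier}: {tokens} tokens ({tokens / total:.0%})")
# free: 6000 tokens (60%)
# pro: 3000 tokens (30%)
# enterprise: 1000 tokens (10%)
```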

"Which agents are token-hungry?"

```shell
sentry dashboard widget add 'AI Cost Attribution' "Avg Tokens per Agent" \
  --display table --dataset spans \
  --query "avg:gen_ai.usage.total_tokens" "count" \
  --where "span.op:gen_ai.invoke_agent" \
  --group-by "gen_ai.agent.name" \
  --sort "-avg:gen_ai.usage.total_tokens"
```

If your "Research Agent" averages 15K tokens per run while "Summarizer Agent" averages 2K, you know where to focus prompt optimization.

"Is my prompt caching actually saving money?"

```shell
sentry dashboard widget add 'AI Cost Attribution' "Cache Hit Rate" \
  --display line --dataset spans \
  --query "sum:gen_ai.usage.input_tokens.cached" "sum:gen_ai.usage.input_tokens" \
  --where "span.op:gen_ai.request"
```

If your cached-to-total ratio isn't improving after enabling Anthropic prompt caching, something is wrong with your prompt structure.

Why tracing matters for agent monitoring

Dashboards show aggregates. Traces show decisions.

A dashboard tells you error rates went up or latency spiked. A trace tells you which agent, which model call, and which tool was responsible.

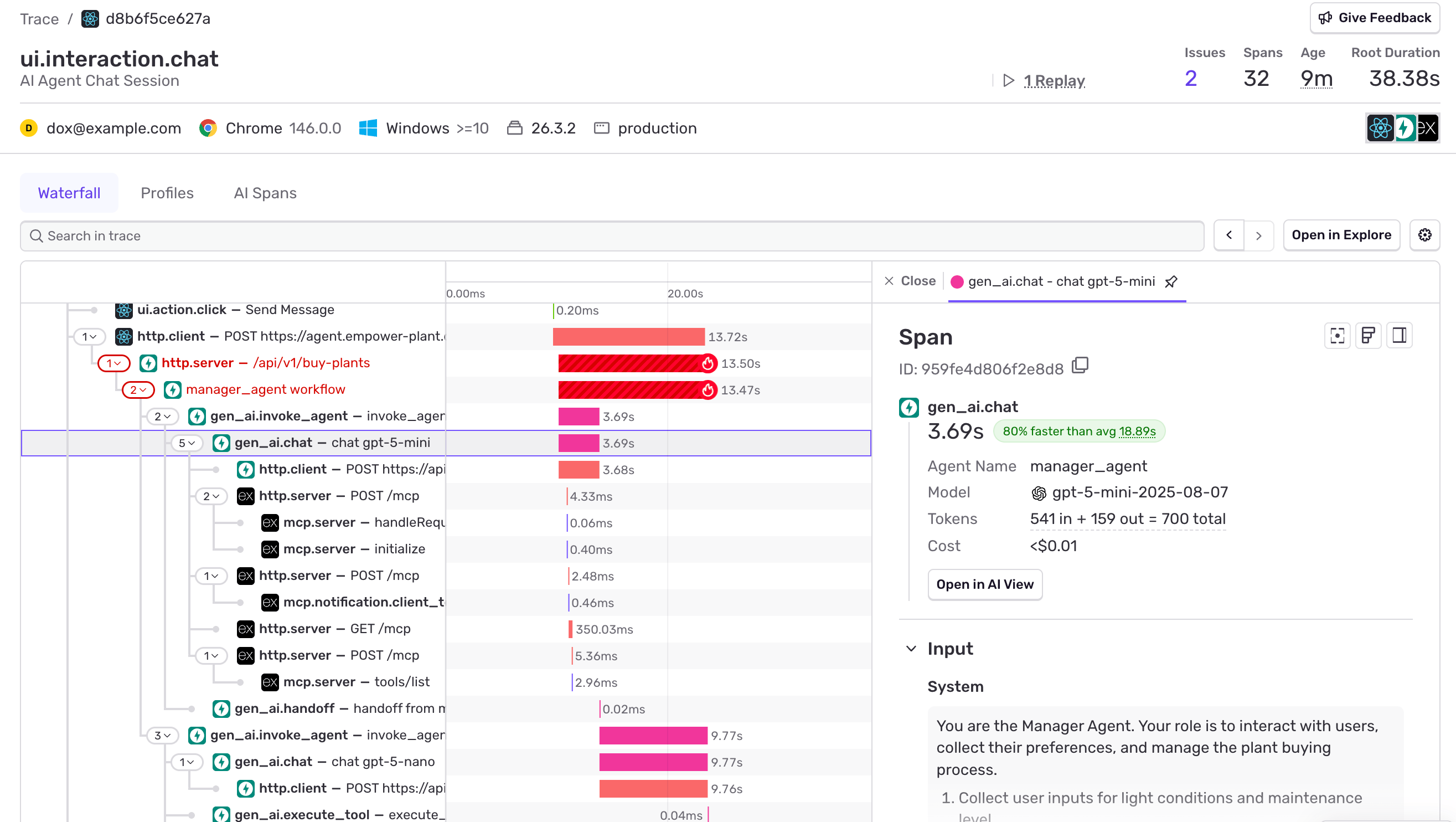

Distributed tracing already captures the full span tree for a request: browser interactions, HTTP calls, server-side routing, database queries. Agent observability plugs into this. Your gen_ai.* spans show up as children inside existing traces, so the LLM calls, tool executions, MCP server interactions, and sub-agent handoffs sit right alongside your regular application spans. No separate system needed.

This is what makes it powerful. You're not looking at agent data in isolation. You're looking at the full request, from the user's click to the final tool response, with agent decisions as one layer in the stack.

Here's what this looks like in Sentry's trace view:

Distributed trace showing a full agent workflow from browser click through LLM calls, MCP server interactions, and agent handoffs

One request, end to end: from a user clicking "Send Message" in the browser, through the API layer, into agent orchestration with LLM calls and MCP server interactions, through a handoff to a second agent. Click any span to see the model, tokens, cost, and system prompt.

Agent observability best practices

Regardless of which platform you use, you should:

Use structured tracing, not logs. Unstructured logs can't reconstruct the reasoning chain. OpenTelemetry gen_ai spans give you a searchable, filterable span tree that powers dashboards and trace views simultaneously.

Sample AI traces at 100%. Agent runs are span trees, and sampling keeps or drops entire executions, not individual calls. If your tracesSampleRate (traces_sample_rate in Python) is below 1.0, you're losing complete agent runs. Use a tracesSampler (traces_sampler in Python) to keep AI routes at 100% while sampling everything else at your baseline.

Track cost by user, not just by model. The pre-built dashboard shows per-model totals. You need per-user and per-tier attribution to make business decisions about rate limiting, pricing, and model routing.

Monitor tool reliability separately. A tool that fails 5% of the time might barely move your overall error rate, but if every run calls it, it's causing roughly 1 in 20 agent runs to produce bad output. Your agent monitoring dashboard should surface per-tool error rates on their own.

Connect AI monitoring to your full stack. An agent failure might be caused by a slow database query, a failed external API call, or a frontend timeout. Isolated AI monitoring can't surface these root causes.
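The sampling advice above can be sketched as a Python traces_sampler. This is a minimal sketch that assumes the 2.x Python SDK sampling context, where the transaction name lives under sampling_context["transaction_context"]["name"] and the upstream decision under "parent_sampled"; check your SDK version's docs. The route prefixes and the 0.1 baseline are hypothetical values to adjust for your app, and what actually ends up in the transaction name depends on your framework integration.

```python
# Hypothetical route fragments that serve AI agent traffic; adjust for your app.
AI_ROUTE_PREFIXES = ("/api/chat", "/api/agent")
# Hypothetical baseline rate for everything else.
BASELINE_SAMPLE_RATE = 0.1


def traces_sampler(sampling_context: dict) -> float:
    """Keep every AI trace; sample all other traffic at the baseline rate."""
    name = sampling_context.get("transaction_context", {}).get("name", "")
    # Substring match so names like "POST /api/chat" also count as AI routes.
    if any(prefix in name for prefix in AI_ROUTE_PREFIXES):
        return 1.0
    # Otherwise honor an upstream decision so distributed traces stay consistent.
    parent_sampled = sampling_context.get("parent_sampled")
    if parent_sampled is not None:
        return 1.0 if parent_sampled else 0.0
    return BASELINE_SAMPLE_RATE


# Wire it up in place of traces_sample_rate:
# sentry_sdk.init(dsn="YOUR_DSN", traces_sampler=traces_sampler)
```

This sketch checks the AI route before the upstream decision, so agent runs are kept even when the caller wasn't sampled; the tradeoff is that those traces may be missing their frontend spans.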

Full-stack agent observability

Agent observability is most powerful when it sits on top of a full APM platform, connecting agent spans to errors, performance traces, session replays, and logs across your entire stack.

Isolated AI monitoring gives you gen_ai spans in a silo. You can see that your Research Agent made 8 LLM calls and cost $0.04. What you can't see is that it made 8 calls instead of 3 because your search_docs tool is hitting a slow Postgres query that's timing out, causing the agent to retry with a rephrased query each time.

When agent spans share context with the rest of your stack, the full picture comes together. Errors surface with their full span tree attached. Session replays show the user interaction that triggered a bad agent run. Upstream infrastructure problems (a degraded vector database, a flaky external API, etc.) show up in the same trace as the agent behavior they caused.

Four steps to first trace

Install the SDK:

```shell
pip install sentry-sdk      # Python
npm install @sentry/node    # Node.js
```

Initialize with tracing enabled.

Make an AI call; spans and dashboards populate automatically.

(Optional) Install the CLI skill for your AI assistant:

```shell
npx skills add https://cli.sentry.dev
```

If your framework is auto-instrumented, you're done. If it's not, manual instrumentation takes about 10 lines of code per span type.

Try Sentry for free - AI monitoring is included on all plans.